Tag: HCI

This is a picture of me completely and unapologetically engrossed in a game of Space Invaders on a VIC 20. Here’s an early commercial for it, featuring the one and only William Shatner.

Several weeks ago the team at the Media Archaeology Lab (MAL) celebrated their accomplishments to date by hosting an event – called a MALfunction – for the community. Attendees included founders of local startups, the Dean of the College of Arts and Sciences of the University of Colorado, students who are interested in computing history, and a few other friends. The vibe was electric – not because there were any open wires from the machines, but because this was truly a venue and a topic that is a strong intersection between the university and the local tech scene.

Recently, Amy and I underwrote the Human Computer Interaction lab at Wellesley College. We did so not only because we believe in facilitating STEM and IT education for young women, but also because we both have a very personal relationship to the college and to the lab. Amy, on a weekly basis, speaks to the impact that Wellesley has on her life. I, obviously, did not attend Wellesley but I have a very similar story. My interest in technology came from tinkering with computers, machines, and software in the late 1970s and early 1980s, just like the collection that is curated by the MAL.

Because of this, Amy and I decided to provide a financial gift to the MAL as well as my entire personal computer collection, which included an Apple II (as well as a bunch of software for it), a Compaq Portable (the original one – that looks like a sewing machine), an Apple Lisa, a NeXT Cube, and my Altair personal computer.

Being surrounded by these machines just makes me happy. There is a sense of joy to be had from the humming of the hard drives, the creaking of 30-year-old space bars, and squinting at the less-than-retina displays. While walking back to my condo from the lab, I think I pinned down what makes me so happy while I’m in the lab. An anachronistic experience with these machines is: (1) a reminder of how far we have come with computing, (2) a reminder to never take computing for granted – it’s shocking what the label “portable computer” was applied to in 1990, and (3) a perspective of how much further we can innovate.

My first real computer was an Apple II. I now spend the day in front of an iMac, a MacBook Air, and an iPhone. When I ponder this, I wonder what I’ll be using in 2040. The experience of the lab is one of true technological perspective and those moments of retrospection make me happy.

In addition, I’m totally blown away by what the MAL director, Lori Emerson, and her small team have pulled off with zero funding. The machines at MAL are alive, working, and in remarkably good shape. Lori, who teaches English full time at CU Boulder, has created a remarkable computer history museum.

Amy and I decided to adopt MAL, and the idea of building a long term computer history museum in Boulder, as one of our new projects. My partner Jason Mendelson quickly contributed to it. If you are up for helping us ramp this up, there are three things you can do to help.

1. Give a financial gift via the Brad Feld and Amy Batchelor Fund for MAL (Media Archaeology Lab).

2. Contribute old hardware and software, especially stuff that is sitting in your basement.

3. Offer to volunteer to help get stuff set up and working.

If you are interested in helping, just reach out to me or Lori Emerson.

Amy and I just underwrote the renovation of Wellesley College’s new Human-Computer Interaction Lab. The picture above is a screen capture of the Wellesley College home page today (called their “Daily Shot” – they change the home page photo every day) with a photo from yesterday when Amy did the ribbon cutting on the HCI Lab.

Amy went to Wellesley (graduated in 1988) and she regularly describes it as a life-changing experience. She’s on the Wellesley College Board of Trustees and is in Boston this week for a board meeting (which means I’m on dog walking duty every day). I’m incredibly proud of her involvement with Wellesley and it’s easy to support the college, as I think it’s an amazing place.

The Wellesley HCI Lab also intersects with my deep commitment to getting more women engaged in computing. As many of you know, I’m chair of the National Center for Women & Information Technology. When Amy asked if I was open to underwriting the renovation, the answer was an emphatic yes!

I’m at a Dev Ops conference today being put on by JumpCloud (I’m an investor) and SoftLayer. It’s unambiguous in my mind that the machines are rapidly taking over. As humans, we need to make it easy for anyone who is interested to get involved in human-computer interaction, as our future will be an integrated “human-computer” one. This is just another step in us supporting this, and I’m psyched to help out in the context of Wellesley.

Amy – and Wellesley – y’all are awesome.

My partner Jason Mendelson sent me a five-minute video from Wired that shows how a Tesla Model S is built. I watched from my condo in downtown Boulder as the sun was coming up and thought some of the images were as beautiful a dance as I’ve ever seen. The factory has 160 robots and 3000 humans and it’s just remarkable to watch the machines do their thing.

As I watched a few of the robots near the end, I thought about the level of software that is required for them to do what they do. And it blew my mind. And then I thought about the interplay between the humans and machines. The humans built and programmed the machines which work side by side with the humans building machines that transport humans.

Things are accelerating fast. The way we think about machines, humans, and the way they interact with each other is going to be radically different in 20 years.

One of the places new approaches to human-computer interaction play out is with video games. One company – Harmonix – has been working on this for 18 years.

Harmonix, which is best known for Rock Band, is also the developer of three massive video game franchises. The first, Guitar Hero, was the result of almost a decade of experimentation that resulted in the first enormous hit in the music genre in the US. Virtually everyone I know remembers the first time they picked up a plastic guitar and played their first licks on Guitar Hero. Two years later, Rock Band followed, taking the music genre up to a new level, and being a magnificent example of a game that suddenly absorbed everyone in the room into it. Their more recent hit, Dance Central, demonstrated how powerfully absorbing a human-based interface could be, especially when combined with music, and is the top-selling dance game franchise for the Microsoft Kinect.

Last fall, Alex Rigopulos and his partner Eran Egozy showed me the three new games they were working on. Each addressed a different HCI paradigm. Each was stunningly envisioned. And each was magic, even in its rough form. Earlier this year I saw each game again, in a more advanced form. And I was completely and totally blown away – literally bouncing in my seat as I saw them demoed.

So – when Alex and Eran asked me if I’d join their board and help them with this part of their journey, I happily said yes. It’s an honor to be working with two entrepreneurs who are so incredibly passionate and dedicated to their craft. They’ve built, over a long period of time, a team that has created magical games not just once, but again and again. And they continue to push the boundaries of human-computer interaction in a way that impacts millions of people.

I look forward to helping them in whatever way I can.

Orbotix just released a new version of the Sphero firmware. This is a fundamental part of our thesis around “software wrapped in plastic” – we love investing in physical products that have a huge, and ever improving, software layer. The first version of the Sphero hardware just got a brain transplant and the guys at Orbotix do a brilliant job of showing what the difference is.

Even if you aren’t into Sphero, this is a video worth watching to understand what we mean as investors when we talk about software wrapped in plastic (like our investments in Fitbit, Sifteo, and Modular Robotics).

When I look at my little friend Sphero, I feel a connection to him that is special. It’s like my Fitbit – it feels like an extension of me. I have a physical connection with the Fitbit (it’s an organ that tracks and displays data I produce). I have an emotional connection with Sphero (it’s a friend I love to have around and play with). The cross-over between human and machine is tangible with each of these products, and we are only at the very beginning of the arc with them.

I love this stuff. If you are working on a product that is software wrapped in plastic, tell me how to get my hands on it.

As the endless stream of emails, tweets, and news comes at me, I find myself going deeper on some things while trying to shed others. I’ve been noticing an increasing amount of what I consider to be noise in the system – lots of drama that has nothing to do with innovation, creating great companies, or doing things that matter. I expect this noise will increase for a while as it always does whenever enthusiasm for startups and entrepreneurship increases. When that happens, I’ve learned that I need to go even deeper into the things I care about.

My best way of categorizing this is to pay attention to what I’m currently obsessed about and use that to guide my thinking and exploration. This weekend, as I was finally catching up after the last two weeks, I found myself easily saying no to a wide variety of things that – while potentially interesting – didn’t appeal to me at all. I took a break, grabbed a piece of paper, and scribbled down a list of things I was obsessed about. I didn’t think – I just wrote. Here’s the list.

- Startup communities

- HCI

- Human instrumentation

- 3D printing

- User generated content

- Integration between things that make them better

- Total disruption of norms

If you are a regular reader of this blog, I expect none of these are a surprise to you. When I reflect on the investments I’m most involved in, including Oblong, Fitbit, MakerBot, Cheezburger, Orbotix, MobileDay, Occipital, BigDoor, Yesware, Gnip, and a new investment that should close today, they all fit somewhere on the list. And when I think of TechStars, it touches on the first (startup communities) and the last (total disruption of norms).

I expect I’ll go much deeper on these over the balance of 2012. There are many other companies in the Foundry Group portfolio that fit along these lines, especially when I think about the last two. Ultimately, I’m fascinated by stuff that “glues things together” while “destroying the status quo.”

What are you obsessed about? And are you spending all of your time on it?

I believe that science fiction is reality catching up to the future. Others say that science fact is the science fiction of the past. Regardless, the gap between science fact and science fiction is fascinating to me, especially as it applies to computers.

My partners and I spend time at CES each year along with a bunch of the founders from different companies we’ve invested in due to our human computer interaction theme. In addition to being a great way to start the year together, it gives us a chance to observe how the broad technology industry, especially on the consumer electronics side, is trying to catch up to the future.

We are investors in Oblong, a company whose co-founder (John Underkoffler) envisioned much of the future we are currently experiencing when he created the science and tech behind the movie Minority Report. Oblong’s CEO, Kwin Kramer, wandered the floor of CES with this lens on and had some great observations which he shares with you below.

Looking back at last year’s CES through the greasy lens of this year’s visit to Vegas, three trends have accelerated: tablets, television apps platforms, and new kinds of input.

I gloss these as “Apple’s influence continuing to broaden”, “a shift from devices to ecosystems,” and “the death of the remote control.”

Really, the first two trends have merged together. The iPod, iPhone, and iPad, along with iTunes, AirPlay, and FaceTime, have profoundly influenced our collective expectations.

All of the television manufacturers are now showing “smart” TV prototypes. “Smart” means some combination of apps, content purchases, video streaming, video conferencing, web browsing, new remote controls, control from phones and tablets, moving content around between devices, screen sharing between devices, home “cloud”, face recognition, voice control, and gestural input.

Samsung showed the most complete bundle of “smart” features at the show this year and is planning to ship a new flagship television line that boasts both voice and gesture recognition.

This is good stuff. The overall interaction experience may or may not be ready for the mythical “average user”, but the features work. (An analogy: talking and waving at these TVs feels like using a first-generation PalmPilot, not a first-generation iPhone. But the PalmPilot was a hugely successful and category changing product.)

The Samsung TVs use a two-dimensional camera, not a depth sensor. As a result, gestural navigation is built entirely around hand motion in X and Y and open-hand/grab transitions. The tracking volume is roughly the 30 degree field of view of the camera between eight feet and fifteen feet from the display.
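For a rough feel of how big that tracking volume is, here is a minimal sketch of the geometry. It assumes the 30 degrees is the camera's full horizontal field of view and that the camera behaves like an ideal pinhole; neither detail is stated by Samsung, and the function name is made up for illustration.

```python
import math


def tracking_width_ft(distance_ft: float, fov_degrees: float = 30.0) -> float:
    """Width of the camera's view at a given distance.

    Assumes an ideal pinhole camera whose full horizontal field of view
    is fov_degrees (an assumption, not a published Samsung spec).
    """
    half_angle = math.radians(fov_degrees / 2.0)
    return 2.0 * distance_ft * math.tan(half_angle)


# Near and far edges of the tracking range quoted in the text.
near = tracking_width_ft(8.0)
far = tracking_width_ft(15.0)

print(f"width at 8 ft:  {near:.1f} ft")
print(f"width at 15 ft: {far:.1f} ft")
```

Under those assumptions the usable region is a little over four feet wide at the near edge and about eight feet wide at the far edge, which is roughly a couch-sized slice of the living room.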

Stepping back and filtering out the general CES clamor, what we’re seeing is the continuing, but still slow, coming to pass of the technology premises on which we founded Oblong: pixels available in more and more form factors, always-on network connections to a profusion of computing devices, and sensors that make it possible to build radically better input modalities.

Interestingly, there are actually fewer gestural input demos on display at CES this year than there were last year. Toshiba, Panasonic and Sony, for example, weren’t showing gesture control of TVs. But it’s safe to assume that all of these companies continue to do R&D into gestural input in particular, and new user experiences in general.

PrimeSense has made good progress, too. They’ve taken an open-hand/grab approach that’s broadly similar to Samsung’s, but with good use of the Z dimension in addition. The selection transitions, along with push, pull and inertial side-scroll, feel solid.

Besides the television, the other interesting locus of new UI design at CES is the car dashboard. Mercedes showed off a new in-car interface driven partly by free-space gestures. And Ford, Kia, Cadillac, Mercedes and Audi all have really nice products and prototypes and employ passionate HMI people.

For those of us who pay a lot of attention to sensors, the automotive market is always interesting. Historically, adoption in cars has been one important way that new hardware gets to mass-market economies of scale.

The general consumer imaging market continues to amaze me, though. Year-over-year progress in resolution, frame rate, dynamic range and cost continues unabated.

JVC is showing a 4K video camera that will retail for $5,000. And the new cameras (and lenses) from Nikon and Canon are stunning. There’s no such thing anymore as “professional” equipment in music production, photography or film. You can charge all the gear you need for recording an album, or making a feature-length film, on a credit card.

Similarly, the energy around the MakerBot booth was incredibly fun to see. Fab and prototyping capabilities are clearly on the same downward-sloping, creativity-enabling, curve as cameras and studio gear. I want a replicator!

And, of course, I should say that Oblong is hiring. We think the evolution of the multi-device, multi-screen, multi-user future is amazingly interesting. We’re helping to invent that future and we’re always looking for hackers, program managers, and experienced engineering leads.

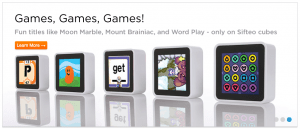

If you follow our investments, you know that one of our core themes is Human Computer Interaction. The premise behind this theme is that the way humans interact with computers 20 years from now will make the way we interact with them today look silly. We’ve made a number of investments in this area with recent ones including Fitbit, Sifteo, Orbotix, Occipital, and MakerBot.

Last week Bloomberg did a nice short piece on Sifteo. I’m always intrigued by how mainstream media presents new innovations like Sifteo in a five-minute segment. It’s hard to get it right – there’s a mixture of documentary, interview, usage of the product, and explanation of why it matters, all crammed into a few minutes combined with some cuts of the company, founders, and some event (in this case a launch event).

I find the Sifteo product – and the Sifteo founders – to be amazing. They have a lot of the same characteristics as the other founders of the companies in our HCI theme – incredibly smart, creative, and inventive technologists who are obsessed with a particular thing at the boundary of the interaction between humans and computers.

We know that these are risky investments – that’s why we make them. As we’ve already seen with companies like Oblong and Fitbit, it’s possible to create a company based on an entirely new way of addressing an old problem, product, or experience with a radically different approach to the use, or introduction, of technology. Having played extensively with the beta version of the Sifteo product, I’m optimistic that they are on this path.

If this intrigues you, order a set of Sifteo Cubes today (they have just started shipping). In the meantime, enjoy the video, and our effort to help fund the entrepreneurs who are trying to change the way humans and computers interact with each other.

Orbotix, one of our investments (and a TechStars Boulder 2010 company), is looking for an iOS and an Android developer.

If you don’t know Orbotix, they make Sphero, the robotic ball you control with your smartphone. And if you wonder why you should care, take a look at Sphero on his chariot being driven by Paul Berberian (Orbotix CEO) while running FaceTime.

I got the following note from Adam Wilson, the co-founder of Orbotix – if you fit this description, email jobs@orbotix.com.

We are looking for two new full time positions to fill as soon as possible. We need talented iOS and Android Developers that are not afraid of a little hard work and a little hardware! You must have an imagination. No previous robotics experience necessary but it doesn’t hurt. We want someone that can help make an API, low level protocols, implement games and work on other research and development tasks for Sphero. We expect some level of gaming history and previous experience in the field. There are online Leaderboards and some side tasks include coding up demonstration apps for our numerous interviews, conventions and for fun! We pay well, have plenty of food and beverage stocked including beer, redbull and the famous hot-pockets, are in downtown Boulder and literally play with robots all day/night long. Read our full jobs posting at https://www.orbotix.com/jobs/ for more info. Take a chance…. email me at jobs@orbotix.com.